What Is Text-to-Video AI? How It Works in Plain English

Text-to-video AI is exactly what it sounds like: you type a description, and software produces a finished video. Two years ago this was a research demo. Today, tools like Sora, Runway, and Framesurfer ship production-ready clips with narration, captions, and music. This article explains each layer of the technology in plain language so you can judge whether it fits your workflow and understand what actually happens when you press Generate.

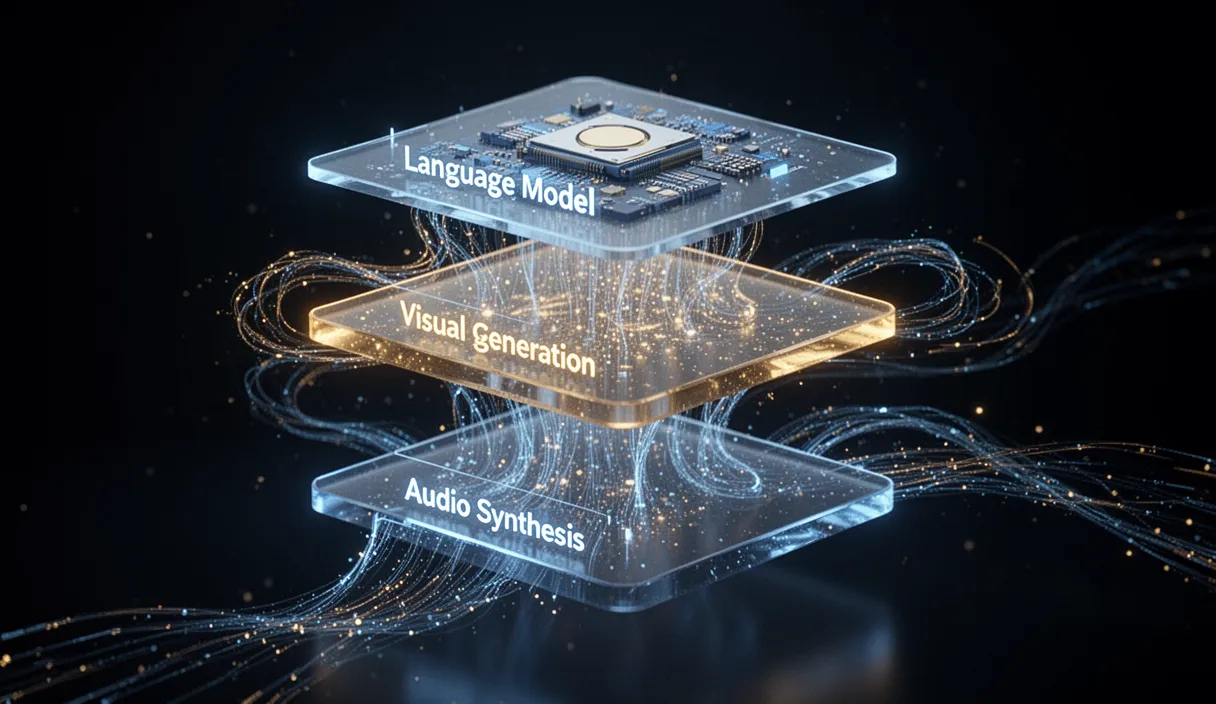

The Three Layers of Text-to-Video AI

How Diffusion Models Turn Text Into Moving Images

Current Capabilities: What You Can Actually Make Today

Practical Use Cases Across Industries

Limitations and Where the Technology Is Heading

Conclusion

Text-to-video AI is a layered pipeline that combines language models, diffusion-based visual generation, and audio synthesis. It is not magic and it is not perfect, but it is genuinely useful for producing narrated, captioned video content at a speed and cost that were impossible two years ago. Understanding how each layer works helps you write better prompts, choose the right tool, and set realistic expectations for what you will get.

Frequently Asked Questions

What is text-to-video AI?

Text-to-video AI is a technology that converts written descriptions into finished video content. It uses a combination of language models to plan scripts, diffusion models to generate visuals, and text-to-speech systems to add narration. The result is a complete video with visuals, voiceover, captions, and music produced from a text prompt.
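For readers who think in code, the three layers can be pictured as three functions called in sequence. The sketch below is purely illustrative: every name in it (`Scene`, `plan_script`, `generate_visual`, `synthesize_narration`, `text_to_video`) is hypothetical, and each function returns a placeholder string where a real tool would call a language model, a diffusion model, or a text-to-speech system. It does not reflect how any specific product is built.

```python
from dataclasses import dataclass


@dataclass
class Scene:
    narration: str  # text the voiceover reads (also usable as the caption)
    visual: str     # placeholder standing in for generated frames
    audio: str      # placeholder standing in for synthesized speech


def plan_script(prompt: str) -> list[str]:
    """Stand-in for the language-model layer: one narration line per sentence."""
    return [part.strip() for part in prompt.split(".") if part.strip()]


def generate_visual(line: str) -> str:
    """Stand-in for the diffusion layer: a real system would return video frames."""
    return f"<clip for: {line}>"


def synthesize_narration(line: str) -> str:
    """Stand-in for the text-to-speech layer: a real system would return audio."""
    return f"<speech for: {line}>"


def text_to_video(prompt: str) -> list[Scene]:
    """Run the three layers in order and collect one Scene per script line."""
    return [
        Scene(line, generate_visual(line), synthesize_narration(line))
        for line in plan_script(prompt)
    ]


if __name__ == "__main__":
    for scene in text_to_video("A fox runs through snow. The sun rises."):
        print(scene)
```

The point of the sketch is the shape of the pipeline: the script is planned first, and the visual and audio layers then work from each script line.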

How long can AI-generated videos be?

Most tools produce videos between 30 seconds and 5 minutes. Each individual generation is typically 2 to 12 seconds, but platforms like Framesurfer chain multiple clips together with transitions and narration to create longer, cohesive videos. Some tools support up to 10-minute outputs.
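To get a feel for how much chaining is involved, a rough calculation helps. The sketch below assumes every clip has the same length and ignores any time consumed by transitions. Both are simplifications, and the function name `clips_needed` is made up for this example.

```python
import math


def clips_needed(target_seconds: float, clip_seconds: float) -> int:
    """Number of equal-length clips required to cover the target duration."""
    if target_seconds <= 0 or clip_seconds <= 0:
        raise ValueError("durations must be positive")
    # Round up: a partly filled final clip still has to be generated.
    return math.ceil(target_seconds / clip_seconds)


if __name__ == "__main__":
    five_minutes = 5 * 60
    for clip_len in (2, 12):
        count = clips_needed(five_minutes, clip_len)
        print(f"{clip_len}-second clips for a 5-minute video: {count}")
```

Under these assumptions a 5-minute video needs 25 clips at 12 seconds each and 150 clips at 2 seconds each, which is why tools that stitch clips together automatically matter for longer videos.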

Is the video quality good enough for professional use?

For social media, YouTube, educational content, and marketing videos, current quality is production-ready at up to 1080p. For broadcast television or cinematic work, the technology is not yet a replacement for traditional production, mainly because of limitations in fine-detail rendering and physics accuracy.

Do I need technical skills to use text-to-video AI?

No. Most modern tools are designed for non-technical users. You type a description of what you want, and the system handles scripting, visual generation, narration, and editing. Writing a clear, specific prompt is the main skill that improves results.

How much does text-to-video AI cost?

Pricing varies widely. Some tools offer free tiers with watermarks or limited resolution. Paid plans typically range from $10 to $50 per month for moderate usage, with per-video or credit-based pricing for heavy users. Enterprise plans with API access can cost significantly more.
